Imagine that you’ve deployed AI to generate your technical documentation. The tool promised to revolutionize your content workflow, and honestly, it delivered on speed. What used to take days now happens in minutes.

Now fast-forward six months. Customer support is drowning in tickets from confused users. Social media mentions of your product are increasingly sarcastic. Sales is asking pointed questions about why adoption rates are dropping, and nobody can figure out what changed. The product is as solid as ever.

In this post, I want to offer a more optimistic outcome. It follows up on a recent post that ended on a scenario that could lead to exactly this kind of story.

The invisible problem

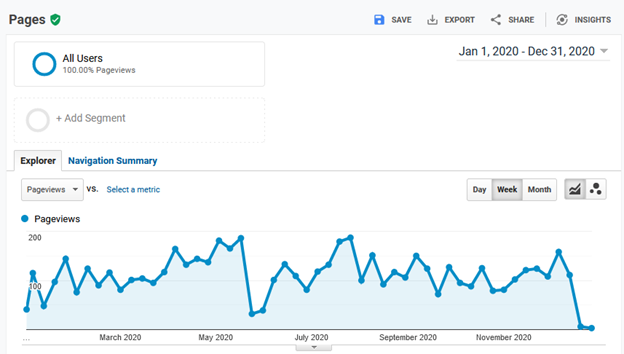

When you don’t have reliable documentation analytics, problems announce themselves through every channel except the actual source. Your first clue that AI is producing unhelpful developer docs won’t be a dashboard alert. It’ll be angry developers posting screenshots of your broken code examples on social media.

Remember, automating your processes accelerates them in whatever direction they were already heading. If your current documentation process and its performance are unmeasured and reactive, you won’t know whether AI is helping until long after the damage has been done.

Meanwhile, the AI just keeps producing content that checks all the boxes while serving no one.

A different path forward

The dystopian scenario above isn’t inevitable. But avoiding it requires resisting the “deploy AI everywhere immediately” impulse and taking a more methodical approach.
