Over the past few days, I read a couple of articles on content metrics from the blogosphere: one had promise but ultimately indulged in some analytic sleight of hand, while another actually made me smile, and its focus on a solid methodology gave me hope.

Why is a solid methodology important? It’s the basis of your reputation and credibility. It’s the difference between knowing and guessing. These two articles reflect two examples of this.

- Measuring your “Return on Content”: How to tell whether your content is successful

- How metrics help us measure Help Center effectiveness

First, the one that had a sound approach, but some flawed measurement methods.

How metrics help us measure Help Center effectiveness

This article has promise, but trips and falls before the finish line. To its credit, it recommends:

1. Setting goals and asking questions

2. Collecting data

3. Reviewing the data

4. Reviewing goals and going back to #2

5. Lather, rinse, repeat (as the shampoo suggests)

As a general outline, this is as good as they come, but the devil is in the details. If you’re in a hurry, just skip to the end or you can…

One of their goal-related questions sounds reasonable: Are visitors actually solving their problems?

Ideally, it would be connected to a higher-level business goal of improving customer satisfaction or something (and it might be, but the article doesn’t mention that). Nevertheless, it’s a reasonable question. Where they trip is in the way they operationalized the question.

After formulating the question you want answered, the next step is to figure out how to answer it, a process known as operationalization: making the question answerable. Ideally, the answer can be found directly; however, it is often very difficult to ask a question that can be measured accurately without iterating on the question and the measurement method. In content, especially help content, the answers aren’t always easy to measure directly, so proxies are frequently used.

Proxies aren’t direct measures, so they might not be as accurate as a direct measurement; you don’t know until you test them. They might, however, be accurate enough, and so they are a popular (and valid) tool to use when direct measurements are not possible or are too costly to be practical.

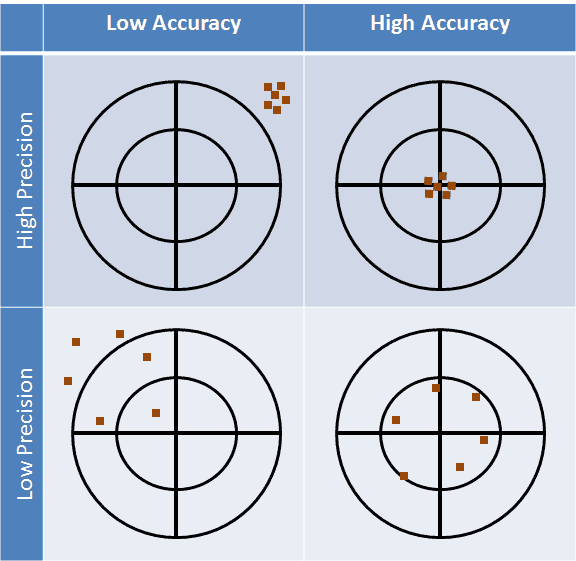

If you understand the system that you’re observing, you might identify some proxies that reflect the measurement you’re after and do so with the necessary precision and accuracy. Here’s a diagram to illustrate those two terms in case you’re a little rusty. Precision is the inverse of variance (how tightly repeated measurements cluster) and accuracy is how close the measurements are to the actual value.
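To make the two terms concrete, here is a minimal Python sketch. It assumes you have repeated readings from a proxy and, for testing purposes, know the true value it is supposed to reflect. The function name and the sample readings are made up for illustration.

```python
from statistics import mean, pstdev


def accuracy_and_precision(measurements, true_value):
    """Return (bias, spread) for repeated measurements of a known value.

    bias:   mean measurement minus the true value (closer to 0 = more accurate)
    spread: standard deviation of the measurements (smaller = more precise)
    """
    bias = mean(measurements) - true_value
    spread = pstdev(measurements)
    return bias, spread


# Hypothetical readings from two proxies for a quantity whose true value is 50.
tight_but_off = [60, 61, 59, 60, 60]           # precise, but not accurate
scattered_but_centered = [40, 60, 45, 55, 50]  # accurate on average, not precise

print(accuracy_and_precision(tight_but_off, 50))
print(accuracy_and_precision(scattered_but_centered, 50))
```

The first proxy clusters tightly around the wrong value; the second is centered on the right value but scatters widely. Which of the two is the bigger problem depends on the decision you want to make from the data.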

I mentioned accurate enough, because 100% accuracy and precision can be expensive to obtain and you might not need 100% of either to accomplish your goals. The critically important things to know about your metrics are:

- How much accuracy and precision do you need?

- How much accuracy and precision can you afford?

- How much accuracy and precision does your current system provide?

If you don’t know the answer to all of these questions about your measurements, you should probably not rely on decisions that result from them. You generally want measures that are as accurate and precise as you need to inform the decisions you want to make from the data. The value of those decisions is what will influence how much you can afford.

Practically, you need to iterate on the three factors to come up with what works for you. You might need more precision or accuracy than you can afford, for example. The question you then need to ask is whether it is worth paying for the extra precision or accuracy. Will that extra cost improve your decisions sufficiently? Will you get decisions that earn more than they cost to make?

If you don’t know the answer to any of those three questions, you shouldn’t be measuring and you should NOT use those measures to make business decisions. There’s evidence to suggest that rolling the dice would be as effective as, and perhaps more effective than, the decisions you base on such measurements.

Back to the article: it suggests some hypothetical proxies for its metrics. I say hypothetical because I have yet to see them supported by any credible empirical evidence (and I’ve seen them used for more than a decade). The article, for example, asks, “Are visitors actually solving their problems?” and answers that with a “self-service score” described on this page as:

…we calculated our Self-Service Score (SSS) by dividing the total number of unique visitors that interacted with help content by the total number of unique users with tickets. We defined ‘content interaction’ as someone who did more than just visit the Help Center landing page or navigate straight to a new ticket form.

OK, that’s a reasonable hypothesis, but was it verified or validated as being a sufficiently accurate and precise proxy for their decisions? You should not use an untested hypothesis as a KPI. It could be tested and verified with some additional data, but they don’t describe any testing or validation in the article. My post on Reader goals–Reading to do now talks about the use case they describe and what makes it hard to measure from just web-based measurements.
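For reference, the arithmetic in the Self-Service Score description quoted above can be sketched as follows. This is only my reading of that description, not the article’s implementation: the page paths, the data shapes, and the function names are all assumptions made for illustration.

```python
# Hypothetical paths for the Help Center landing page and the new-ticket form.
NON_CONTENT_PAGES = {"/hc", "/hc/requests/new"}


def interacted_with_content(pages_viewed):
    """True if the visitor viewed at least one page other than the landing page or ticket form."""
    return any(page not in NON_CONTENT_PAGES for page in pages_viewed)


def self_service_score(visits, ticket_users):
    """Unique visitors who interacted with help content, divided by unique users with tickets.

    visits:       dict mapping a visitor ID to the list of pages that visitor viewed
    ticket_users: set of user IDs that submitted at least one ticket
    """
    if not ticket_users:
        raise ValueError("need at least one user with a ticket")
    content_visitors = {v for v, pages in visits.items() if interacted_with_content(pages)}
    return len(content_visitors) / len(ticket_users)


# Made-up data for one reporting period.
visits = {
    "u1": ["/hc", "/hc/articles/1"],
    "u2": ["/hc"],
    "u3": ["/hc/requests/new"],
    "u4": ["/hc/articles/2"],
}
ticket_users = {"u3", "u5"}

print(self_service_score(visits, ticket_users))
```

Note that nothing in this calculation checks whether a visitor actually solved a problem. It only counts who viewed what, which is why the score remains a hypothesis until it is validated against some independent measure of success.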

Where I’ve seen such metrics go astray is when they are used to track changes that are smaller than the confidence band: changes that are literally “in the noise,” or statistically speaking, changes that are as likely to be the result of systemic noise as they are the result of something you or the customer did. However, because those three questions were never asked or answered, users of this type of metric have no way to know.
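One rough way to check whether a change is “in the noise” is to compare it with how much the metric already varies when nothing has changed. The sketch below is a simplification under stated assumptions: the history comes from a stable period, the readings are roughly independent, and a band of two sample standard deviations is an acceptable threshold. The function name and the weekly scores are hypothetical, and this is not a substitute for a proper statistical test.

```python
from statistics import mean, stdev


def change_exceeds_noise(history, new_value, z=2.0):
    """Return True if new_value is more than z sample standard deviations from the historical mean."""
    baseline = mean(history)
    noise = stdev(history)
    return abs(new_value - baseline) > z * noise


# Made-up weekly scores from a period with no content changes.
weekly_scores = [4.1, 3.8, 4.3, 3.9, 4.0, 4.2]

print(change_exceeds_noise(weekly_scores, 4.2))  # a small move, inside the band
print(change_exceeds_noise(weekly_scores, 5.0))  # a large move, outside the band
```

If the metric routinely swings by a few tenths from week to week on its own, a change of that size after a content update tells you nothing about the update.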

The article also treats other hypothetical proxies as actual data, such as pages visited per session and page duration (time-on-page), as a proxy for whether the reader found what they were looking for. In fact, my research has shown that time-on-page does not relate to task success. These methods are creative, but methodologically unsound and, worse, of unknown precision and accuracy in the absence of validation. My series on Reader Goals talks about how to understand the user’s interaction with content vis-à-vis the goals they want to accomplish to help understand how to collect more accurate data.

Measuring your “Return on Content”: How to tell whether your content is successful

I found this today and I liked their approach, in that they look at content goals in terms of what the content is supposed to do for the business. While this article did not go into the same level of analytic detail as the article above, the method they take is more likely to produce results that achieve business goals.

The article starts by shining a bright light on the flawed (and often, unspoken) foundation of many help content metrics:

Effective content achieves a goal. While this may sound obvious, all too often the goal is misidentified:

- that the content is published

- that lots of people look at it

In these cases, content’s “success” could be misleading — reinforcing the creation of too much irrelevant content.

“Pageviews aren’t the goal. Your goal is the goal.” — Mike Powers, Director of Electronic Communications at Indiana University of Pennsylvania

There might be a lot of other metrics collected and presented in an elaborate analytics theater, but the bottom line is these are often, unfortunately, the most important metrics for many writers and the stakeholders to whom they report. Writers need to do better at educating their stakeholders.

This article approaches the content analytics quest with these steps:

- The content’s goal — aka, why are we publishing this?

- Make your goal measurable.

- Measure, tweak, repeat.

The only thing not stated explicitly, but still reflected in the overall approach, is to ensure the content goals you’re tracking and measuring are also influencing the larger business goals the content was written to support.

tl;dr: How to measure help content performance

This is where the rubber hits the road for many help topic authors and in true blog post fashion I’ll present the tips as a list of simplified (but hardly simple) steps:

- Ask questions and thoroughly understand the goal of EACH PAGE YOU WRITE! If you’re just a typist, then never mind and type away. If you’re a content professional, you have a professional obligation to understand and to help your stakeholders understand the value that each page brings to the company and how you can maximize that value.

- Be ready to say no to content. You might be able to deliver more value by NOT writing a topic. If you know that a page or set of pages will not add value, work with your stakeholders to understand why they’re not needed or that there is a better option. If a topic is not needed, spend your energy on content that will produce results. By just doing that, you’re adding value.

- Understand how to measure the value each page contributes to the goal and don’t settle for hypothetical proxies of that value. If you don’t have the tools to measure your value, get them! Insist on them! You’re a content professional and it’s your reputation on the line.

- Don’t rest on best practices. Collect good data and use it to make good decisions.

In my Measuring Value topics, I talk about how different API topics add value and can be measured. Help content can add value to the business, but different content adds value in different ways and is measured differently. Reference topics are the toughest types of topics to understand because they, more than other types of help content, serve user goals that are not constrained to the web and their value can come long after they are written. For that reason, using any metric that includes page views to evaluate them is likely to be misleading. That being said, page views of reference topics can provide some qualitative data, but they are not likely to provide useful KPI-type data. Note that reference topics are not self-help topics.

Finally, I’ll reiterate: don’t make real-world decisions from hypothetical (i.e., unproven or unverified) metrics. I learned this the hard way when I explained to a researcher how we (help content writers) measured content satisfaction based on the average time on page. They asked how I could know that time on page demonstrated satisfaction and I gave basically the same reasons the first article described.

Saying I was “laughed out of the room” would be putting it politely.

Now I know why. Don’t let this happen to you.