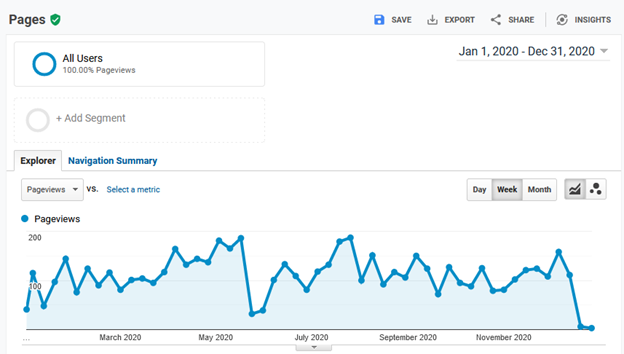

In all the product documentation projects I’ve worked on, a good feedback response rate to our help content has been about 3-4 binary (yes/no) feedback responses per 10,000 page views. That’s 0.03% to 0.04% of page views. A typical response rate has often been more like half of that. Written feedback has typically been about 1/10 of that. A frequent complaint about such data is that it’s not statistically significant or that it’s not representative.
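To make the arithmetic concrete, here's a minimal sketch of those rates in Python. The function and variable names are mine, not from any analytics tool. The 250,000 page-view figure is a hypothetical example. I'm also assuming that "1/10 of that" means one tenth of the typical rate; if it refers to the good rate instead, the written-feedback numbers double.

```python
def response_rate_pct(responses: float, page_views: int) -> float:
    """Return feedback responses as a percentage of page views."""
    if page_views <= 0:
        raise ValueError("page_views must be positive")
    return 100.0 * responses / page_views


def expected_responses(rate_pct: float, page_views: int) -> float:
    """Return the number of responses a given percentage rate would yield."""
    return rate_pct / 100.0 * page_views


# A "good" rate: 3 to 4 binary (yes/no) responses per 10,000 page views.
good_low_pct = response_rate_pct(3, 10_000)
good_high_pct = response_rate_pct(4, 10_000)

# A "typical" rate: about half of the good rate.
typical_low_pct = good_low_pct / 2
typical_high_pct = good_high_pct / 2

# Written feedback: assumed here to be one tenth of the typical rate.
written_low_pct = typical_low_pct / 10
written_high_pct = typical_high_pct / 10

# Hypothetical traffic figure, used only for illustration.
example_page_views = 250_000

for label, low, high in [
    ("good binary", good_low_pct, good_high_pct),
    ("typical binary", typical_low_pct, typical_high_pct),
    ("written", written_low_pct, written_high_pct),
]:
    low_count = expected_responses(low, example_page_views)
    high_count = expected_responses(high, example_page_views)
    print(
        f"{label}: {low:.4f}% to {high:.4f}% "
        f"(about {low_count:.1f} to {high_count:.1f} responses "
        f"per {example_page_views:,} page views)"
    )
```

Running this with your own page-view counts shows how few responses you should expect, which helps set expectations before anyone asks whether the sample is big enough.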

That might be true, but is it useful for decision making?

Time for a short story

Imagine someone standing on a busy street corner. They’re waiting for the light to change to cross the street. It’s taking forever and they’re losing patience. They decide to cross. The person next to them sees that they’re about to cross, taps them on the shoulder, and says, “the light’s still red and the traffic hasn’t stopped.” Our impatient pedestrian points out, “that’s just one person’s opinion,” and charges into the crossing traffic.

Our pedestrian was right. There were hundreds of other people who said nothing. Why would anyone listen to just that one voice? If this information were so important, wouldn’t others, perhaps even a representative sample of the population, have said something?

Not necessarily. The rest of the crowd probably didn’t give it any thought. They had other things on their mind at the time and, if they had given it any thought at all, they likely didn’t think anyone would even consider the idea of crossing against the traffic. The crossing traffic was obvious to everyone but our impatient pedestrian.

Our poor pedestrian was lucky that even one person thought to tell them about the traffic. Was that one piece of information representative of the population? We can’t know that from this story. Could it have been useful? Clearly.

Such is the case when you’re looking at sparse customer feedback, such as you likely get from your product documentation or support site.

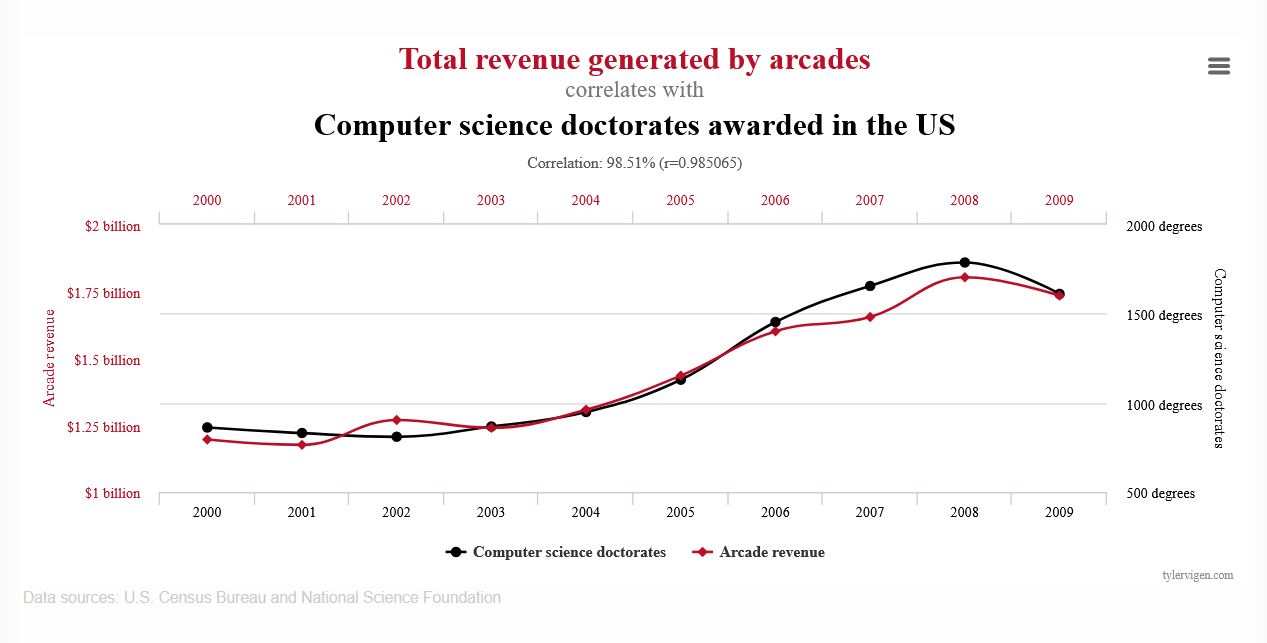

A self-selected sample of 0.03% is likely to be quite biased and not representative of all the readers (the population).

What you should consider, however, is: does it matter if the data is representative of the population? Representative or not, it’s still data—it’s literally the voice of the customer.

Let’s take a closer look at it before we dismiss it.

Understanding the limits of your data

Let’s consider what that one person at the corner or that 0.03% of the page views tell us.

- They don’t tell us what the population thinks. Because such sparse data isn’t statistically representative, we can’t generalize from it to make assumptions about the entire population.

- They do tell us what they think. We might not know what the population thought, but we know what that 0.03% thinks.

The key to working with data is to not go beyond its limits. We know that this sparse data tells us what 0.03% of the readers thought, so what can we do with that?
